Storage Information on CS Linux Systems

This page describes various storage facilities provided by LCSR that you can use. However, you can use the same technology to set up your file servers or to allow files on your server or desktop to be available from other systems.

Note that LCSR is moving in the direction of a few file servers, all accessible from all systems that participate in the LCSR Kerberos system. This includes generally available systems such as iLab systems and most research systems.

Note: Our machines use automount, which means a file system is mounted when you first access it. To reach a file system that is not yet mounted, simply access a path on it. For example, to access /common/users/$USER, type: cd /common/users/$USER
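The automount behavior can be illustrated with a small shell sketch. The helper name enter_path is hypothetical (it is not an LCSR tool); it only tries to enter a directory, which on an automounted path is what triggers the mount.

```sh
# Hypothetical helper: try to enter a directory and report success.
# On an automounted path, the attempt to enter it is what asks the
# automounter to mount the file system.
enter_path() (
    # The parentheses run the body in a subshell, so the caller's
    # working directory is left unchanged.
    cd "$1" 2>/dev/null
)

# Example use:
#   enter_path "/common/users/$USER" && echo "mounted and reachable"
```

A non-zero return means the path does not exist, could not be mounted, or is not accessible to you.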

CS Storage Summary

| Storage | Type | Backed up | Disk Quota (Student, Affiliate / PhD, Faculty) |
|---|---|---|---|
| /common/home/ | SSD | Yes | 50GB / 200GB |
| /common/users/ | SSD | Yes | 100GB / 2TB |
| /fac/users/ | SSD | Yes | 100GB (Faculty only) |
| /freespace/local | SSD | No | None |
| /filer/tmp1 | SSD | No | None |
| /research/projects/{projectName} | Non SSD | Yes | project-specific |
| /research/archive | Non SSD | Yes | None |

Most systems in computer science have access to the following:

- Home directories [/common/home] for general-access systems are hosted on an SSD (actually NVMe) based file server with 40 Gbps networking. This is intended for projects that need high performance. Quota: 50 GB for most students and 200 GB for faculty and PhD students. The faculty home directory [/fac/users] has a 100 GB quota.

- /common/users/$USER. It can supplement your home directory if you need more space. Traditional storage. Quota: 100 GB for most students, 2 TB for PhD students and Faculty.

- /filer/tmp1. Intended as working space if you need more storage for a short period. This SSD storage has no quota but is not backed up. It will be cleared if it fills up, and files may be removed at the beginning of every semester and summer.

- Local storage, usually on /freespace/local. This is SSD storage (i.e., reasonably fast), but it is not backed up and may be cleared out when it fills and between semesters. This is intended for short-term working storage.

- /research/archive. This is for data that funding agencies require to be kept. This will eventually be merged with /common/users into a single system for files that don’t need SSD. Please contact us if you need this service.

Essentially, /common/home is the primary storage for all users, including research, with /common/users for files not currently being used heavily. /common/home has fast disks. /common/users will have a larger capacity but with traditional disks.

Technology We Use

Our underlying approach is NFS, a network file system that Unix and Linux support, and to some extent, macOS and Windows. Specifically, we use NFS version 4 with Kerberos authentication. Version 4 with Kerberos allows incorporation of systems run by different system administrators, which may not always have consistent UIDs and GIDs and may have varying levels of security.

We also recommend setting up the /net virtual file system. That allows you to access files on any system that permits it without getting your system administrator to add a mount. Thus, it provides the illusion that file systems on all of our servers are part of a single, combined namespace.
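As a rough illustration of the combined namespace, the sketch below builds the path under /net for a given host and exported directory. The helper name net_path and the host name used in the example are hypothetical; the /net/host/path layout is the usual automounter convention and is assumed to apply here.

```sh
# Hypothetical helper: print the /net path for a host and one of its
# exported directories, e.g. host "myhost" exporting "/my/directory".
net_path() (
    host=$1
    export_dir=$2
    # Strip one leading slash so the result has no doubled "//".
    printf '/net/%s/%s\n' "$host" "${export_dir#/}"
)

# Example use (only works if the host exports the directory to your machine):
#   ls "$(net_path myhost /my/directory)"
```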

Systems run by LCSR already have the necessary software. For information on integrating your systems into our Kerberos infrastructure, see Setting up Kerberos and Related Services.

Do you need more space?

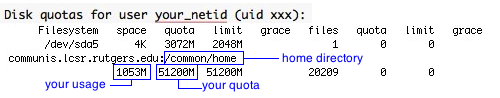

See the Summary section above to determine how much disk quota is available to you. To see how much space you are using on each available filesystem:

- open a terminal window or connect via an SSH client, and

- type: quota -vs

The output shows your current usage and limits for each filesystem.

If you run out of disk space in your home directory (user home directories are under /common/home), your current usage will be close to your quota.
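For a quick estimate of how close a directory tree is to a given limit, a sketch like the following can help. The helper name usage_pct is hypothetical, and it measures the tree with du rather than reading the server's own quota accounting, so the result is only an approximation of what quota reports.

```sh
# Hypothetical helper: print the size of a directory tree as a
# percentage of a quota given in kilobytes (integer arithmetic).
# The quota must be a positive number.
usage_pct() (
    dir=$1
    quota_kb=$2
    # du -sk prints "<kilobytes><TAB><path>"; keep only the number.
    used_kb=$(du -sk "$dir" 2>/dev/null | awk '{ print $1 }')
    [ -n "$used_kb" ] || exit 1
    echo $(( used_kb * 100 / quota_kb ))
)

# Example use, assuming a 50 GB quota counted as 50 * 1024 * 1024 KB:
#   usage_pct "$HOME" 52428800
```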

To find out where your disk space went, type: du -a ~ | sort -rn | more. This command lists the space used by every file and directory under your home directory, with the largest entries at the top.

In most cases, the hidden .cache, .trashcan, or .local directory is growing out of bounds and needs to be cleaned up, or you forgot to empty your Trash.
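The two checks above can be wrapped in small shell functions. This is a minimal sketch: the function names top_usage and hidden_usage are hypothetical, and sizes are reported in kilobytes.

```sh
# Hypothetical helper: list the largest files and directories under a
# directory, biggest first (sizes in KB). Shows 20 entries by default.
top_usage() (
    dir=$1
    count=${2:-20}
    du -ak "$dir" 2>/dev/null | sort -rn | head -n "$count"
)

# Hypothetical helper: report the size of the hidden directories that
# most often grow large, skipping any that do not exist.
hidden_usage() (
    home=${1:-$HOME}
    for name in .cache .local .trashcan; do
        if [ -d "$home/$name" ]; then
            du -sk "$home/$name"
        fi
    done | sort -rn
)

# Example use:
#   top_usage "$HOME" 10
#   hidden_usage
```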

If you run out of space in your home directory, please use /freespace/local or /common/users/your_netid, described in sections B and C below.

Storage available with Computer Science

A. Home Directories

Users’ home directories on our generally available systems are on the LCSR machine named communis.lcsr. For quota information, see the Summary section above. Every machine in a cluster shares the same home directory storage, which is accessible at:

- /common/home for iLab and general research machines.

- /fac/users for Faculty machines.

These directories are backed up, and lost files are recoverable. (Essentially, we keep backups for up to two months and have a fallback position in case of hardware failure.)

Accounts are given home directory quota limits to share this very expensive resource. If your initial quota is too small, consider other shared disks like /common/users, /filer/tmp1, and /freespace/local below. If there are justifiable reasons, you can request additional space (please estimate how much more you need, how long you believe you will need it, and a short justification of what you need it for).

B. Shared Local Filesystems

/freespace/local (available only on iLab/Grad/Fac desktop machines)

Most machines have extra non-quota disk space in a partition called /freespace/local. Note the following restrictions on this filesystem:

– Files are not backed up.

– This storage has no quota but is limited to the available space.

– Files in /freespace/local are deleted without prior warning when machines are re-installed.

– All files may be removed without prior warning between the end of one semester and the start of the next, or when we run out of space.
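Because this storage is limited only by the space currently free, it can be worth checking before writing large files. The following is a minimal sketch; the helper names avail_kb and has_room are hypothetical.

```sh
# Hypothetical helper: print the free space, in KB, of the file system
# that holds the given path. df -P gives one header line followed by
# one data line whose fourth column is the available space.
avail_kb() (
    df -Pk "$1" | awk 'NR == 2 { print $4 }'
)

# Hypothetical helper: succeed only if at least need_kb KB are free.
has_room() (
    need_kb=$2
    free_kb=$(avail_kb "$1")
    [ -n "$free_kb" ] && [ "$free_kb" -ge "$need_kb" ]
)

# Example use: require about 10 GB free before starting a job.
#   has_room /freespace/local 10485760 || echo "not enough space"
```

Free space can change at any time on a shared partition, so a successful check is not a reservation.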

C. Computer Science Remote Filesystems

/common/home

– This is the default home directory for iLab and general CBIM machines.

– Quota: 50 GB for most users and 200 GB for faculty/PhD students.

– Based on a Linux server using the ZFS file system and NVMe storage.

– It is backed up daily. There are daily snapshots kept for 59 days. These can be used to recover files that were accidentally deleted.

– Researchers may request special project directories.

/common/users

– There is a 100 GB quota limit (2 TB for PhD students and Faculty).

– Can be accessed at /common/users/your_netid or /common/users/$USER

– It is accessible from all systems participating in LCSR’s Kerberos, i.e., general-access and most research systems.

– This file system has a single daily backup and no snapshots.

/fac/users

– There is a 100 GB quota limit, for Faculty use only.

– Can be accessed at /fac/users/your_netid or /fac/users/$USER

– It is accessible only from faculty systems participating in LCSR’s Kerberos.

/filer/tmp1

LCSR has a RAID-protected filer (so any single disk failure will not cause data loss) with about 7.5 TB of space available. This filesystem:

– is accessible from all generally available systems and uses SSD storage.

– has no quota but is limited to the available space.

– is not backed up.

– is not intended to be permanent storage.

– is cleared when filled and may be cleared at the beginning of every semester and summer.

D. Special Storage For Projects

- Project space on our filesystems

Larger space can be arranged for individuals or groups needing aggressively backed-up space. This space is generally subject to the same policy as our home directories. (Essentially, we keep backups for up to two months and have a fallback position in case of hardware failure.) This space must be requested by the DCS faculty member working on the project to storage technology via CS HelpDesk.

E. Cloud Storage

- Dropbox – Not supported. Use Scarletmail Google Drive. You have 30GB of storage space there instead of 2GB.

- Google Drive.

The university has arranged 30 GB of disk space for Google Drive under the Scarlet Apps system. All CS Linux machines can be linked to Google Drive. Please see Connecting Google Drive with CS Linux Machines. Note that this can only be used if you are using a Gnome graphical interface locally or via a Microsoft Remote Desktop client. Alternatively, you can use rclone (cmd line) or rclone-browser (GUI) by following https://rclone.org/drive/ for configuration.

To start rclone-browser, run:

export TERMINAL=/usr/bin/xfce4-terminal

rclone-browser

Note: If you set up mount points, set them at /tmp/mountPointName, where mountPointName is your chosen name. Example: your_netid-gdrive

- Box.com

The University has arranged a 1TB capacity contract with box.com. To access Box.com, please use rclone (cmd line) or rclone-browser (GUI) by following https://rclone.org/box/ for configuration.

To start rclone-browser, run:

export TERMINAL=/usr/bin/xfce4-terminal

rclone-browser

Note: If you set up mount points, set them at /tmp/mountPointName, where mountPointName is your chosen name. Example: your_netid-box

- If there are other cloud services you want to access, contact us. Tools are available for many services, but many of them aren’t things we’d like to make generally available.

- If you use VMs or storage in Amazon or similar environments, we’re willing to look at setting up links from systems here to them. Linux tools are used to access Amazon file systems and their competitors.
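For the rclone command-line route, mounting a configured remote under /tmp might look like the sketch below. It only prints the command so you can review it before running it. The helper name rclone_mount_cmd is hypothetical, and the remote name (gdrive in the example) is an assumption: use whatever name you chose when running rclone config.

```sh
# Hypothetical helper: print the commands that create a mount point
# under /tmp and mount an rclone remote there in the background.
#   $1 = remote name from "rclone config" (assumed, e.g. gdrive)
#   $2 = mount point name, e.g. your_netid-gdrive
rclone_mount_cmd() (
    remote=$1
    name=$2
    printf 'mkdir -p /tmp/%s && rclone mount %s: /tmp/%s --daemon\n' \
        "$name" "$remote" "$name"
)

# Example use:
#   rclone_mount_cmd gdrive your_netid-gdrive
# To unmount later on Linux:
#   fusermount -u /tmp/your_netid-gdrive
```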

F. Other Storage Available

If you maintain your own disk space and would like to access it from our machines, or want to access our file systems from home computers, the following are a few options.

- sshfs

LCSR has installed sshfs on our primary clusters. You can also install it on systems you run, including home systems. It lets you mount any file system you can access on a computer where you can log in via SSH. Performance is at least as good as an NFS mount and often better. In most cases, this is a better option than WebDAV. For details, see accessing files remotely.

- /net/yourhost/…

On the faculty and research machines, we have enabled /net automounting. So if your hostname is “myhost”, and you NFS export the filesystem “/my/directory/” to the faculty or research machines, you will be able to automount your files by connecting to “/net/myhost/my/directory”. See sharing files for more details.

- See our how-to page to learn more about available File Sharing options.
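For the sshfs option, a typical invocation has the form sshfs user@host:remote_dir mount_point. The sketch below prints such a command for review. The helper name sshfs_cmd and the host name in the example are hypothetical placeholders, not actual LCSR host names.

```sh
# Hypothetical helper: print an sshfs command line.
#   $1 = login name on the remote machine
#   $2 = remote host (placeholder in the example below)
#   $3 = directory on the remote host
#   $4 = existing, empty local directory to mount it on
sshfs_cmd() (
    printf 'sshfs %s@%s:%s %s\n' "$1" "$2" "$3" "$4"
)

# Example use:
#   mkdir -p "$HOME/mnt"
#   sshfs_cmd your_netid host.example.edu /common/users/your_netid "$HOME/mnt"
# To unmount later on Linux:
#   fusermount -u "$HOME/mnt"
```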

For help with our systems or immediate assistance, visit LCSR Operator at CoRE 235 or call 848-445-2443. Otherwise, see CS HelpDesk. Don’t forget to include your NetID along with descriptions of your problem.